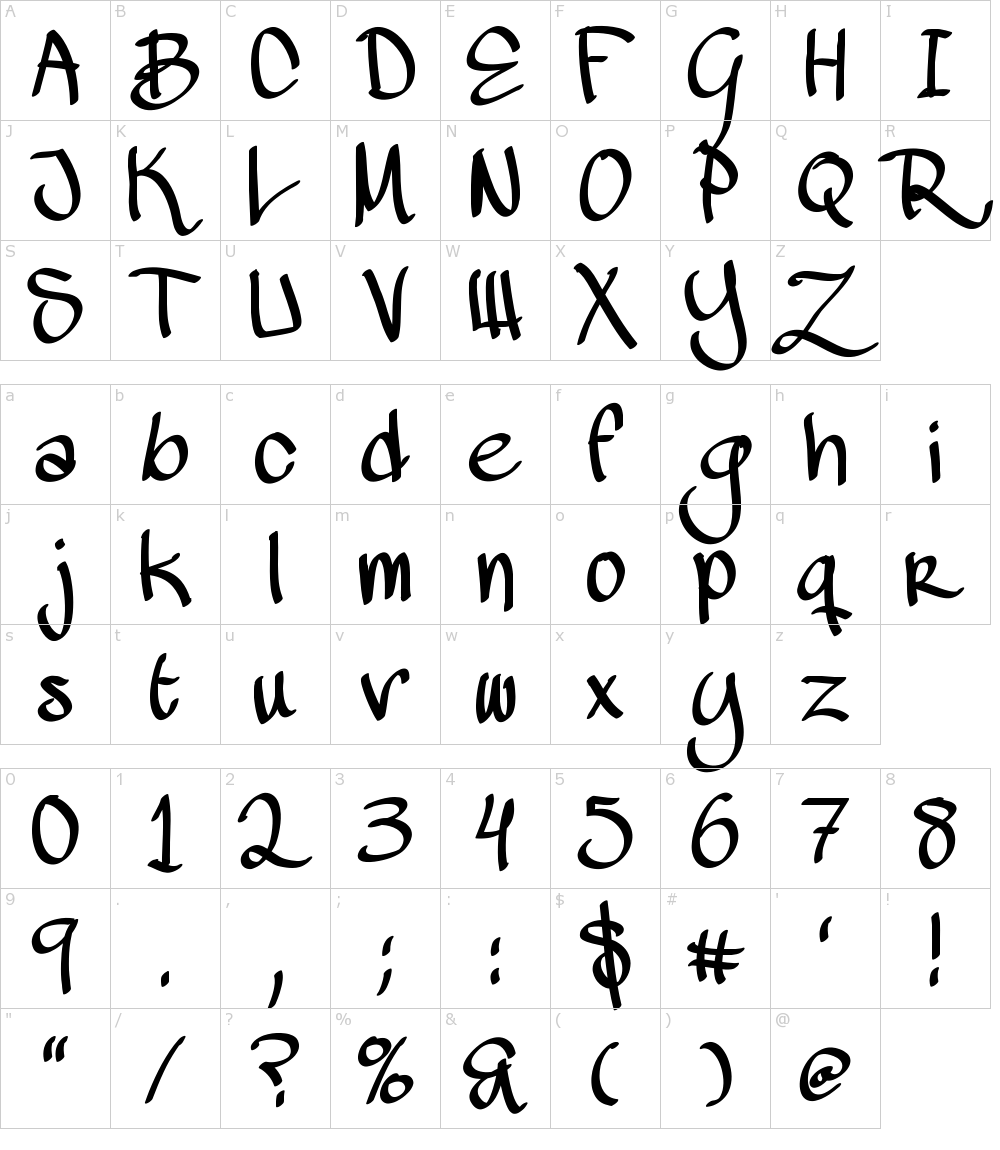

Left: generated image / Right: ground truth

This project trains a GAN-based model on human handwriting and generates character images that reflect the writer's style. All details about this project can be found in the blog post. Before learning human handwriting, the model is pre-trained on a large number of digital font character images; it then does transfer learning on a small number of human handwritten character images.

The basic model architecture is a GAN, which consists of a Generator and a Discriminator. The Generator takes a Gothic-type character image as input and performs style transfer on it. Unlike a vanilla GAN, it has an Encoder and a Decoder inside. The Generator improves the quality of its generated images as it is evaluated by the Discriminator. The Discriminator receives real or fake images and calculates the probability that each one is real. At the same time, it also predicts the font category.

After the Encoder extracts the features of an image, the font category vector is concatenated to the end of the feature vector. In addition, the vectors extracted at the intermediate Encoder steps are passed to their paired vectors in the Decoder, where they are decoded.

The pre-training process was inspired and helped by the zi2zi project of kaonashi-tyc. At first, the model trains for 150 epochs from scratch.

Data: 75,000 images / 150 epochs
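To make the Encoder/Decoder wiring a bit more concrete, here is a minimal sketch that only tracks tensor shapes; it is not the project's actual code. It assumes a U-Net style Generator in which each Encoder layer halves the image size, the font category vector is appended to the bottleneck feature vector, and each Decoder layer also receives the features of its paired Encoder layer. The image size (128), base channel count (64), depth (7), and category vector length (128) are all illustrative assumptions, as are the function names.

```python
# Shape bookkeeping for a U-Net style Generator.
# All sizes and names below are assumptions chosen for illustration.

def encoder_shapes(image_size=128, base_channels=64, depth=7):
    """Return (channels, height, width) after each stride-2 Encoder layer."""
    shapes = []
    size, channels = image_size, base_channels
    for _ in range(depth):
        size //= 2  # each layer halves the spatial size
        shapes.append((channels, size, size))
        channels = min(channels * 2, base_channels * 8)  # cap channel growth
    return shapes


def decoder_input_channels(enc_shapes, category_dim=128):
    """Return the channel count entering each Decoder layer, deepest first.

    The first Decoder layer sees the bottleneck features with the font
    category vector concatenated at the end. Every later layer sees the
    previous Decoder output concatenated with its paired Encoder features.
    """
    bottleneck_channels = enc_shapes[-1][0]
    inputs = [bottleneck_channels + category_dim]
    for skip in reversed(enc_shapes[:-1]):
        decoded_channels = skip[0]  # Decoder output mirrors the paired Encoder layer
        inputs.append(decoded_channels + skip[0])
    return inputs


if __name__ == "__main__":
    shapes = encoder_shapes()
    print("Encoder outputs:", shapes)
    print("Decoder input channels:", decoder_input_channels(shapes))
```

Under these assumptions the bottleneck is a single 512-channel feature vector, so appending a 128-dimensional category vector gives the first Decoder layer 640 input channels. The paired Encoder features double the input channels of every later Decoder layer.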

Korean version: My Handwriting Styler, with GAN & U-Net
